A few updates: the DGX Spark giveaway, new GPU inference slides, and my Vision AI Course

The third one is the best: important details on what I've been working on behind the scenes, and what comes next.

Most engineers use AI tools daily.

But very few understand how these systems actually run in production.

That’s the gap I’m working to close with The AI Merge.

Join 9000+ engineers learning how to build real-world AI systems.

Here are a few updates on what I’ve been building.

My publishing cadence has slowed down lately. I’ve been deep in a few projects that are taking longer than expected, but I’m working toward a more consistent rhythm again.

In the meantime, here’s what I’ve been building for you.

I - The DGX Spark giveaway is done

Last year, I partnered with NVIDIA as an AI Educator.

Through that, I’ve had access to briefings, Slack channels, meetings, slides, and new models and releases to test before they hit GA. All of this has let me stay in close touch with AI engineers at NVIDIA, learn a ton, and gather feedback.

One result of this collaboration was the DGX Spark giveaway I organized with NVIDIA, giving one of my subscribers the chance to win a brand-new DGX Spark Founders Edition.

After this year’s GTC, I ran the raffle, which drew 112 participants in total. After reviewing all applications, I selected a winner at random to keep the process fair and transparent.

I recently finished coordinating shipping with NVIDIA and got in touch with the winner. Honestly, hearing about what he plans to build was easily the best part of the entire process.

Thank you to everyone who joined. I genuinely appreciate the interest and support, and I’ll keep providing value and hopefully organize more of these in the future.

Congrats to the winner, and thanks again to everyone who took part 💚

II - The Slide Deck on GPUs & AI Is 70% Ready

This is the project that’s been consuming most of my time recently, and I’m really excited about it.

I’m working on a large set of slides to explain, in real detail, the path from the first GPU in 1999 to how GPUs are actually used at scale today for AI Training & Inference.

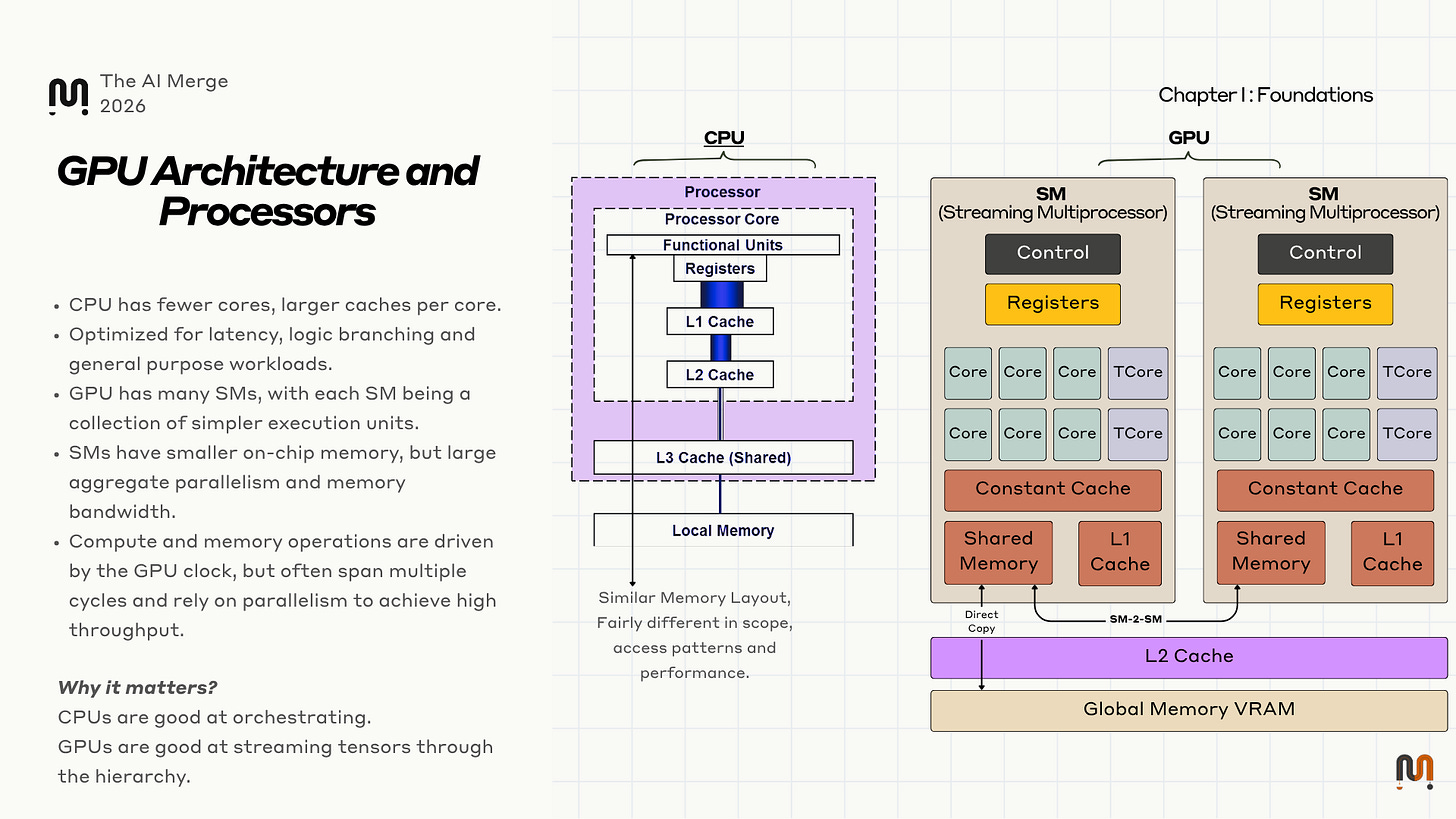

Most content about GPUs skips a critical layer. It tells you that GPUs are important, then skips straight to cluster-scale diagrams and benchmark charts. That leaves a gap in the middle, which I’m aiming to close with this presentation.

I’m currently 30 slides into a 42-slide deep dive, with detailed explanations and hand-crafted diagrams throughout. In the meantime, here’s a sample to give you an idea of what you’ll learn.

We’ll go through a lot of topics, some of them being:

GPU history, from fixed-function hardware to the first GPU in 1999

Video games like Doom (1993) and Quake, OpenGL and DirectX

Flynn's taxonomy, SIMD/SIMT, CUDA, and how GPUs work

Memory types and the memory hierarchy, CPU vs GPU

How GPUs communicate inside an AI Node

How GPUs communicate across AI nodes

GPUDirect, RDMA, NICs, NVLink, NVSwitch, multi-node communication, and server rack design

AI Inference Step by Step

Optimizing AI Inference, Parallelism Techniques, KV Cache, Frameworks

…way more topics in the works.

My goal is to make the full stack legible. Not just the model side, but the hardware path, the node design, the interconnects, and the software stack an AI engineer needs in order to reason about inference and compute in the real world.

Stay tuned: you’ll learn a lot, and I’ll keep you updated on my plans to present it live.

III - My VisionAI course is getting close

I’m close to wrapping up the build phase and moving into writing the course material itself.

This one deserves an article of its own to cover everything inside: data collection, MLOps, edge inference, and agent engineering using Google’s A2A protocol for cross-agent communication and MCP for agent capabilities and skills.

This course is centered on real system behavior, not toy examples. It’s a ground-up implementation of an agentic system that monitors live video feeds from trail cameras deployed at the edge in nature, and lets users chat with it to generate reports, gather insights, and describe captured video events such as:

Action Description - what are the animals doing

Behavior Analytics - feeding, breeding, migration

Timespan Activity - create trails, identify patterns

The sample UI shows video streamed from the edge cameras over WebRTC, and the agentic layer answering simple queries that involve routing, orchestration, and the Camera Inventory and Event Analyzer agent skills.

Here’s a short video of the dev UI so you can get a glimpse of the product taking shape.

Everything runs locally in a production-ready structure, with multiple small language models and vision models ranging from 3B to 8B parameters.

The final UI and the system’s performance, especially in the chat window and across conversation turns, will improve as I optimize further.

If you’re interested in building real AI systems, locally and in the cloud, rather than just calling APIs, this will be worth your time.

Closing

Thanks again to everyone who participated in the giveaway, and to everyone who’s subscribed to The AI Merge. The goal of this publication is simple: help engineers understand how AI systems actually work in production.

That means:

deeper technical breakdowns

real system design

and fewer “toy” examples

If that’s what you’re here for, you’re in the right place.

Make sure you’re subscribed so I can notify you when I schedule the live session on the GPU slides. Also follow me here and on LinkedIn for updates and follow-ups on the MAVS course.

More soon: this next batch of content is going to go deep.

That’s all for today. See you next time 👋
