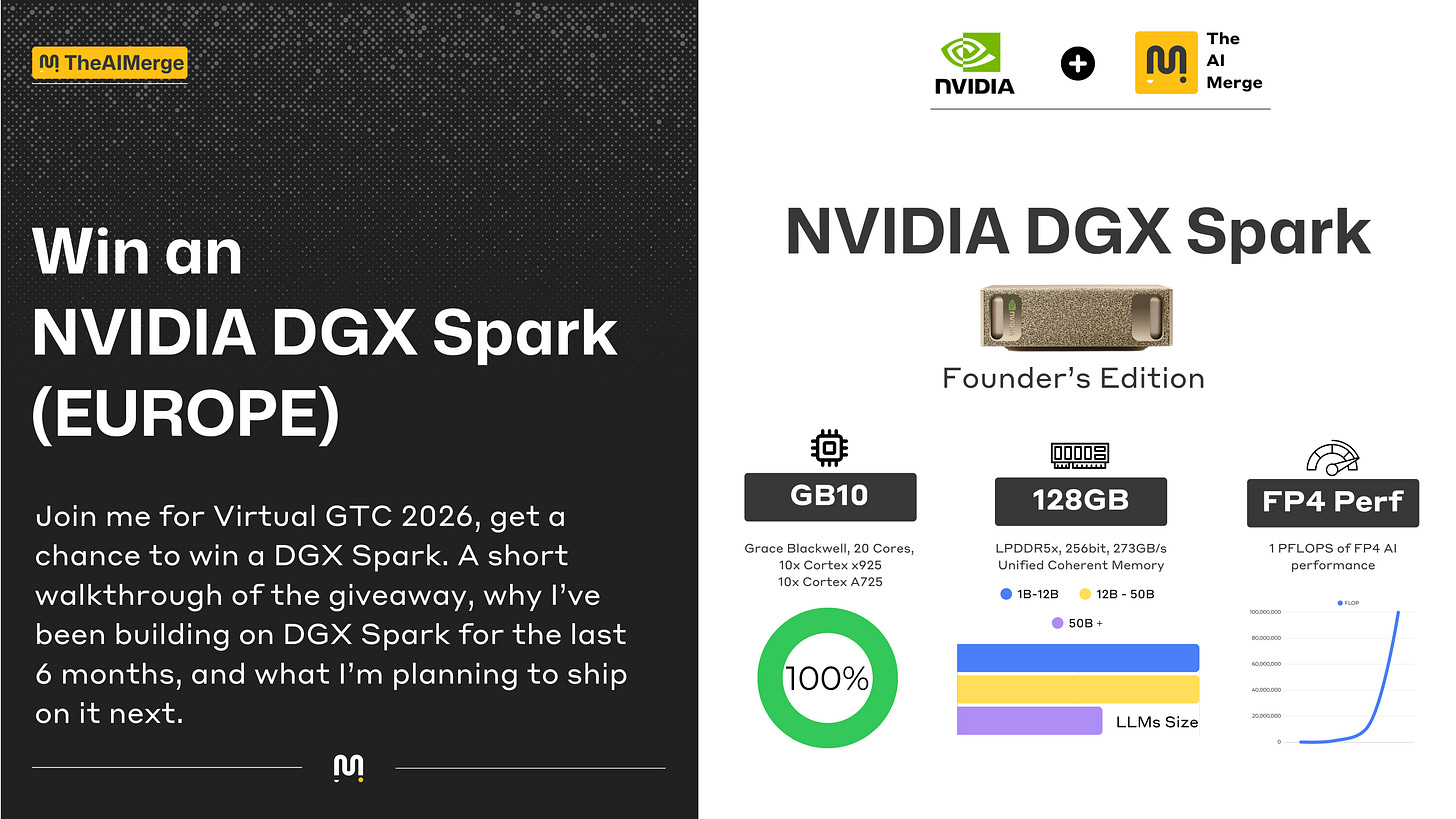

Win an NVIDIA DGX Spark by joining me for Virtual NVIDIA GTC 2026

A short walkthrough of the giveaway, why I’ve been building on DGX Spark for the last 6 months, and what I’m planning to ship on it next.

In this article, you’ll learn about:

How the giveaway works (and what counts as an entry)

A few Virtual GTC 2026 sessions I find interesting

What the DGX Spark is good for when you’re building AI systems locally

What I’ve been working on with it (and why local iteration still matters)

Where to find my DGX Spark unboxing + capabilities breakdown

If you’ve deployed AI systems, you already know the fastest way to make progress is to shorten the “idea → run → inspect → iterate” loop. AI requires a lot of GPU compute, even for basic experiments.

Cloud is great and gives you the compute, but you pay for it every time you want to debug. Local compute, on the other hand, gives you a different kind of control, especially when you’re iterating on data pipelines, model behavior, and end-to-end latency.

That’s the main reason I’ve enjoyed building on the NVIDIA DGX Spark for the past 6 months. It’s been a very practical machine for learning, prototyping, fine-tuning, and building small-to-mid AI systems without having to rent cloud compute to test small bits of my code.

The surprise: I’m giving away 1× NVIDIA DGX Spark (Europe Only)

For NVIDIA GTC 2026 (March 16–19), I’m doing a giveaway for my European audience. If you join me for Virtual GTC, you’ll have a chance to win a DGX Spark.

🎁 Courtesy of NVIDIA.

Virtual GTC is free, and the entry rules are simple (details below).

How to enter the DGX Spark giveaway

Register for GTC 2026 using my link: https://nvda.ws/4qTY2Bn

Attend at least 1 virtual session (Jensen’s Keynote does not count)

Be a free subscriber to this newsletter. Subscribe

Fill out my giveaway form (a Google Form) after attending. It asks for:

your name

your country (Europe only)

your email

which session you attended

a screenshot showing you attended that session

your favorite takeaway from that session

Please read the form now (it takes about 1 minute) to learn the extra steps required.

Quick FAQ:

Is Virtual GTC free?

Yes.

Does the keynote count?

No. To qualify, you need to attend at least one virtual session (not the keynote).

How do I fill in the form?

You’ll have to register with my link, attend a virtual session, take a screenshot of your attendance in the session, and fill in the Google Form.

I subscribed after attending a session, is that fine?

Yes. As long as you’re subscribed by the time you submit the giveaway form.

Do I have to be a paid subscriber?

Nope, a free subscription is enough.

Is this Europe only?

Yes, this giveaway is for my European audience.

Do I need to attend a specific session?

No, any eligible virtual session counts (as long as it’s not the keynote).

How do you verify subscription?

The giveaway form will ask for the email you used to subscribe, so I can confirm eligibility.

A few interesting Virtual GTC sessions

Here are a few sessions that caught my eye; maybe you’ll find them interesting as well.

AI Factories in Europe: Building the Foundations for Scalable Intelligence (S81899)

Accelerate AI Through Open-Source Inference (S81902)

Teach AI to Code in Every Language With NVIDIA NeMo (S82306)

From Data to Meaning: Vision-Language Models Shaping the Cities of Tomorrow (S81867)

Your Learning Pathway: Get Certified for Career Success (C81544)

Behind The Scenes and what I’ve been building on the Spark

In short, I’ve been using the Spark as my main AI development machine for a good few months. Before it, I had a PC with an RTX 4080 (16GB VRAM) and an M1 Max for building with AI locally.

The Spark, with 128GB of unified memory, tops that, letting me run multiple models and heavy processing workloads without constantly watching nvtop or GPU load charts.
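To put the 128GB figure in perspective, here is a back-of-the-envelope sketch of weight-only memory for a few models loaded side by side. Everything in it is an assumption for illustration: the parameter counts are nominal values read off the model names, the 4-bit quantization is a guess at a typical local setup, and the helper names are hypothetical. It ignores KV cache, activations, and whatever the OS takes from the same unified pool, so treat the result as a lower bound, not a measurement.

```python
# Rough weight-only memory estimate. All figures below are illustrative assumptions.

UNIFIED_MEMORY_GB = 128  # Spark's unified memory, shared between CPU and GPU


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def total_weight_memory_gb(models: dict[str, tuple[float, float]]) -> float:
    """Sum the weight-only estimates for models given as name -> (params, bits)."""
    return sum(weight_memory_gb(params, bits) for params, bits in models.values())


# Nominal parameter counts taken from the model names, assumed 4-bit weights.
MODELS = {
    "20B model": (20e9, 4),
    "120B model": (120e9, 4),
    "30B model": (30e9, 4),
}

if __name__ == "__main__":
    for name, (params, bits) in MODELS.items():
        print(f"{name}: ~{weight_memory_gb(params, bits):.0f} GB of weights")
    total = total_weight_memory_gb(MODELS)
    print(f"Total: ~{total:.0f} GB of {UNIFIED_MEMORY_GB} GB")
```

Under these assumptions the three models add up to roughly 85 GB of weights, which leaves headroom on a 128GB machine but would not fit in 16GB of VRAM. Real usage will be higher once context length and runtime overhead are included.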

Below are the main threads I’ve tested and worked on with the Spark:

Local Finetuning - going through the 30+ Spark Playbooks and Unsloth Tutorials.

Local Inference - with Ollama, LM Studio and llama.cpp

Multi Agent Systems - with SLMs (Qwen3.5, GPT-OSS-20B/120B, and NVIDIA Nemotron Nano 30B-A3B)

AI Systems - with Inference, Application, UI & Backend as docker stacks, closely mirroring setups you’d see in real deployments.

Edge AI - with Vision Models, Audio, LLMs and pretty much any multimodal pipelines and workflows.

Agentic AI - MCP, A2A, PydanticAI, LangGraph, LangSmith, Opik.

RAG & Multimodal RAG - a few small projects, mainly on VSS (Video Search and Summarization)

Computer Vision - my old passion, running workloads such as Object Detection, Multi-Camera Tracking, and Instance Segmentation.

Image/Video Generation - ComfyUI (Spark Playbooks)

The bottom line is that you can do a lot with the Spark, from tiny PoC projects up to solid AI systems that you can build and validate locally, and then scale to heavier workloads in the cloud.

If you’re curious about what the Spark looks like, its hardware and architecture details, and what it can do, check out my unboxing and capabilities breakdown. 👇

Wrap-up

Register for Virtual GTC with my link

Attend at least one virtual session (not the keynote)

Submit the giveaway form linked in this article

Make sure you’re a free subscriber so I can reach you if you win

I’ll announce the winner shortly after GTC ends. In the meantime, if you want the practical breakdown of what the Spark can do (and what I’m building on it), the unboxing/capabilities article is linked above.

Register here: https://nvda.ws/4qTY2Bn

Good luck! 🫶

Alex